The AI Adoption Illusion

Doing daft things faster

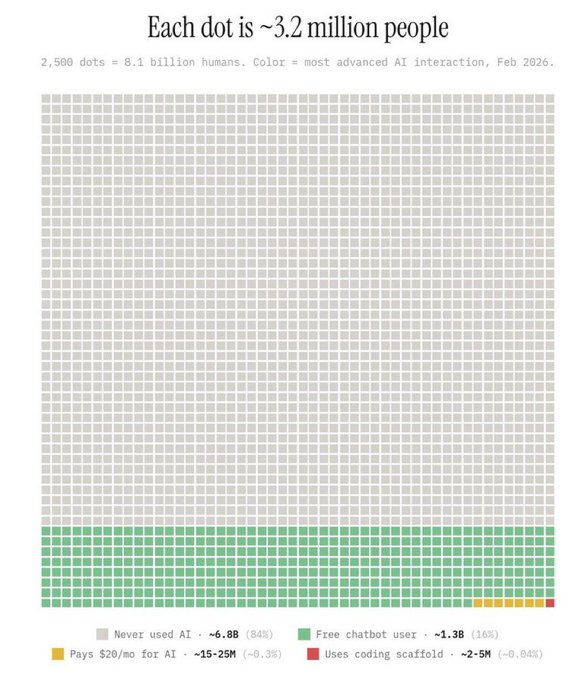

Around one in six people in the world now use generative AI tools.

AI promises transformation.

In reality, many of us are using it to rewrite emails, overthink text messages, and outsource uncomfortable conversations.

Inside organisations, a new metric is emerging: AI adoption.

Dashboards measure prompts per employee.

Readiness scores appear.

Leaders ask whether teams are “using AI enough”.

This misses the point.

AI adoption is an activity metric.

It tells us people are interacting with a tool.

It does not tell us whether anything meaningful is changing.

And confusing activity with progress is a familiar mistake.

Economists have a name for it.

Goodhart’s Law: when a measure becomes a target, it ceases to be a good measure.

If employees know their AI usage is being tracked, the incentive is simple:

use more AI — not use AI better.

A recent viral chart shows where we are in the AI adoption cycle.

Each dot represents millions of people.

The story is simple: most of the world has still never interacted with ChatGPT or Claude.

It looks like the early stages of a global technology shift.

But the obvious question is: so what?

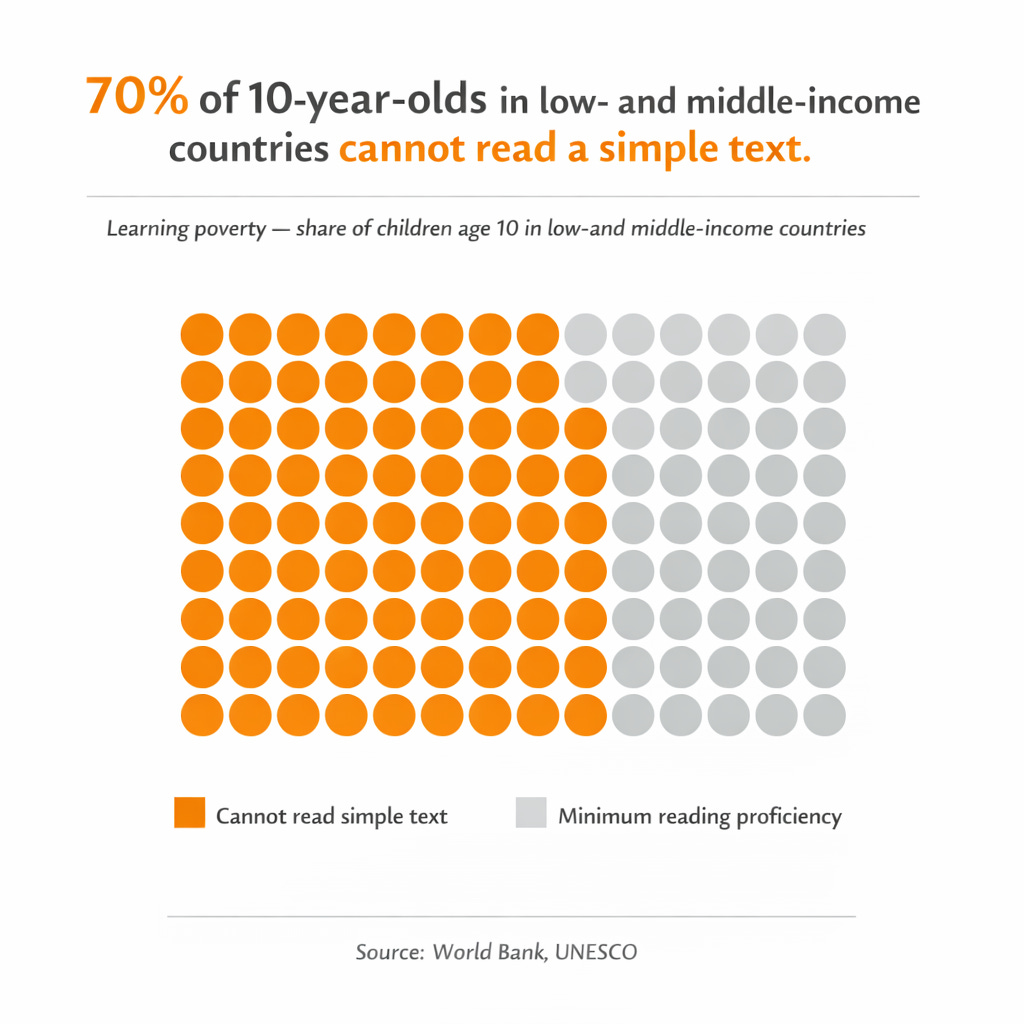

Another statistic tells a very different story.

Learning poverty, the share of children who cannot read and understand a simple text by age 10, sits at around 70% in low- and middle-income countries.

Hundreds of millions of children are entering an AI-shaped world without the foundational capability required to use it.

If you cannot read, you cannot meaningfully use AI.

AI usage measures exposure to a tool.

Literacy measures the ability to benefit from it.

AI adoption is a growth metric for technology companies, not for society — and not for employers.

Firms Are Enforcing AI Adoption

Inside companies, the pressure to adopt is intensifying.

Boards expect progress.

Vendors push urgency.

Competitors announce initiatives.

Leaders worry about falling behind.

So organisations start measuring usage.

🎡 Prompts per employee.

🎡 Tokens consumed.

🎡 Adoption dashboards.

The logic is implicit: more usage signals progress.

It doesn’t.

Research is starting to show exactly why.

In one study with Boston Consulting Group, consultants using AI completed tasks faster and produced higher-quality work — until they crossed a boundary.

Beyond that point, performance actually declined.

The researchers called this the “jagged technological frontier” — the invisible line where AI shifts from improving work to quietly degrading it.

Most organisations do not know where that line is.

And they are not measuring it.

There is a second risk.

When people use AI, they tend to trust it — even when they shouldn’t.

Research on automation bias shows that individuals often defer to AI outputs, even when those outputs contradict evidence or their own judgement.

The problem is not just that AI can accelerate bad processes.

It is that AI-assisted decisions can be worse than unaided ones — because people stop checking.

Adoption metrics capture none of this.

Adoption Is Not Transformation

PwC’s global chairman Mohamed Kande recently noted that more than half of companies are seeing little value from AI investments — largely because foundational groundwork is missing.

Organisations are scaling usage before they understand where value actually comes from.

As a shareholder, I don’t care how many times employees used Google, Excel or ChatGPT this week. I care about performance - and ultimately, profits.

None of this is to underestimate the power of AI.

Productivity gains are real. Experimentation matters. Learning requires deployment.

But history suggests something important: adoption alone does not create transformation.

The question is not:

“How do we implement AI faster?”

Better questions are:

What are we trying to achieve?

What capabilities do we need?

How are customer expectations changing?

Organisations repeatedly confuse deployment with change.

We Have Seen This Before

In HR, we once had paper application forms: pages of questions filled in with a black biro.

When the internet arrived, we put the same forms online.

When applications came in, we printed them out and sent them to hiring managers.

When mobile arrived, we shortened the forms - but kept the process.

We used new technology to make old processes faster.

We did not redesign the system.

This is happening again with AI.

Implementing chatbots without redesigning the service ends in tears.

Productivity Is Not Transformation

There is a useful distinction here.

Some changes improve existing work.

Others redefine it.

The first is productivity.

The second is transformation.

Most organisations are doing the first — and calling it the second.

History suggests this takes time.

When electricity first entered factories, many simply added it to existing layouts and saw modest gains.

The real transformation came later — when organisations redesigned how work was done around the new technology.

With AI, we are still at the wiring stage - not the redesign.

Doing Smart Things Slower

So the real risk is not that organisations adopt AI too slowly.

It is that they adopt it quickly in the wrong places.

Accelerating processes that should not exist.

Making decisions with less scrutiny.

Measuring activity instead of outcomes.

Doing the wrong things faster is one of the most reliable ways to create the illusion of progress.

So what does a better approach look like?

Experiment — but don’t turn usage into a performance metric

Invest in the few people willing to redesign how work happens

Question the purpose of a process before automating it

Redesign workflows, roles, and decisions before selecting tools

Adoption should follow clarity.

It is not a substitute for it.

Change What Matters

AI will reshape industries, organisations, and learning in meaningful ways.

But the constraint is unlikely to be access to tools.

It will be capability, judgement, and the willingness to redesign work.

We are already seeing the pattern.

We celebrate adoption before capability.

We scale tools before building foundations.

The organisations most likely to succeed with AI are the ones least focused on measuring its adoption.

That is not a comfortable thought when the board wants a dashboard.

The risk is not that organisations fail to adopt AI.

The risk is that they succeed - and nothing that matters actually changes.

Drafting slowly,
