Where Is My Mind?

Delegation, dependency, and the split self in the age of AI

There are moments we remember forever.

One of these, for me, is the final scene of Fight Club: “Where Is My Mind?” by the Pixies starts, and the corporate buildings that Tyler Durden rigged with explosives begin to blow up and crumble.

I’ve seen the movie about 28 times (another music quote for my millennial friends - but more of a guilty pleasure), mostly in my teen years. Back then I was very sensitive to its angst and fascination with decay, though I didn’t grasp its full-blown indictment of capitalism, consumerism, and corporatism.

So I find it funny that in 2026, the film feels even more relevant than it was in 1999. It popped back into my mind this weekend, as I was chipping away at the legs for my game table in one of my precious woodworking sessions, and the song came on the radio (yes, on the radio). Fresh off having published the last article on OpenClawd (Red Lobster), a question emerged:

“What are we really delegating when we delegate to software?”

It’s a question I already tried to tackle in Enterprise Graph, then in You are training AI, but who gets paid for it?, but it only started to gain ground once I had the opportunity to really test this type of technology.

This article is not a tutorial. It’s a small piece of research on what it means to work, think, and decide in a world where AI starts to do it for us. Some things are good, some are bad. What changes, as usual, is how we use it, and whether and how governments and corporations decide to build guardrails around it.

The OpenClawd Moment: Buildings Come Down

In the movie, the buildings coming down are Tyler Durden’s way of destroying the infrastructure of a system he despises. In my mind right now, those same buildings resemble something different: jobs and identities being redefined.

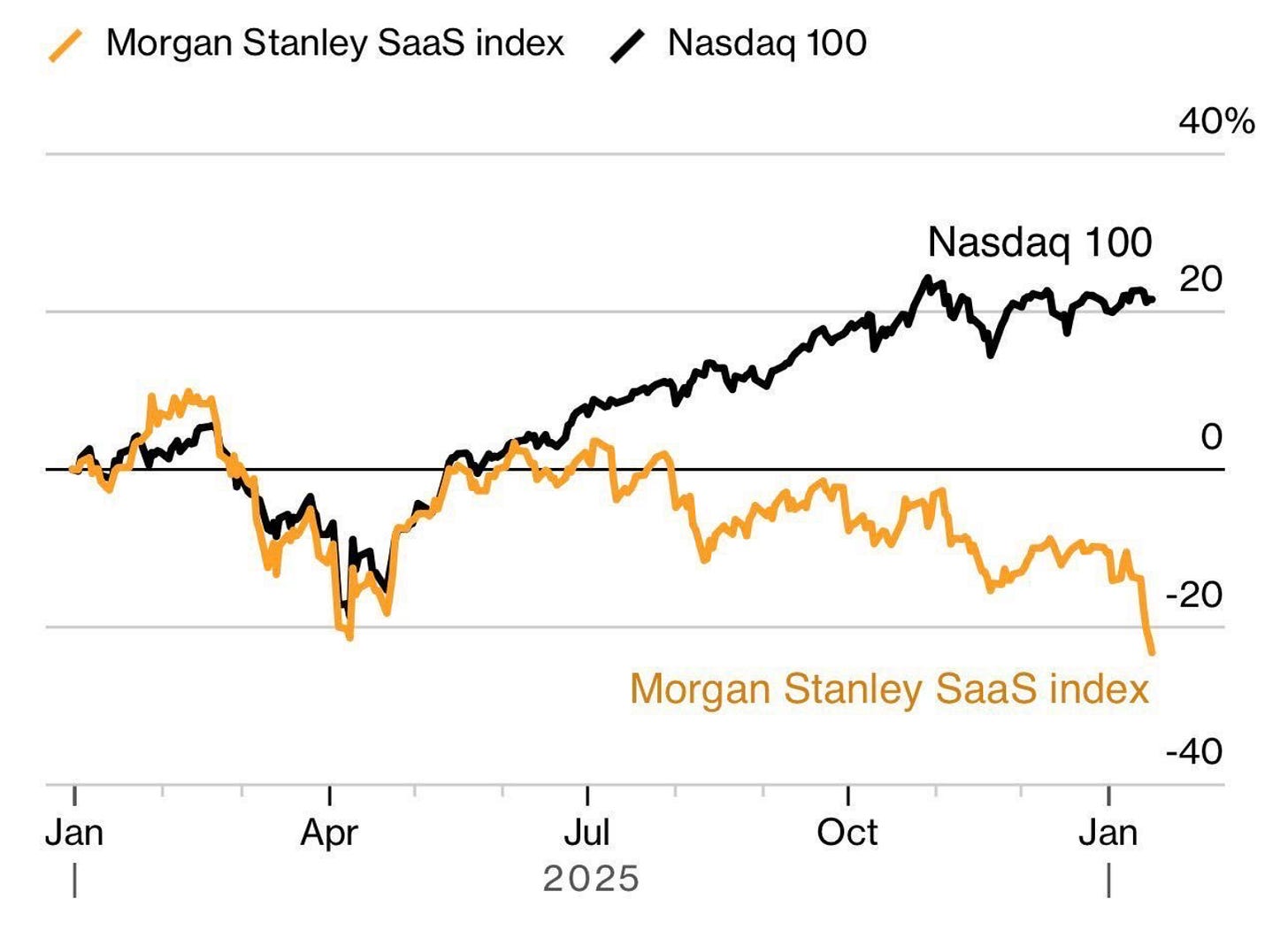

I’ve been talking about this shift for a while, but 2026 is the first year I can see things moving for real. Right now, SaaS companies are watching their stock prices correct as markets price in the possibility that AI can substitute their features. I don’t think it spells the death of all of them, but I do believe we’ll see far more homegrown products and software, especially for small and medium businesses. The building blocks are becoming accessible enough that the middlemen start to look expensive.

But the more personal tremor is this: once you delegate enough, it stops feeling like assistance and starts feeling like outsourcing parts of yourself. Not just the boring parts, not just the admin, but the decisions you didn’t make, the messages you didn’t write, the reminders you didn’t remember.

Right now, the experience can be satisfying: it removes friction that was created by two decades of normalised performance and hustle culture (and too many SaaS products). But here’s my prediction: there will be a moment, not so far off, where in your day-to-day you won’t be entirely sure which part of your day you did, and which part your agent handled.

That’s what I mean by the mind being split.

We think of delegation as something external, like “I handed this off.” But it’s going to be closer to internal fragmentation: “Part of me did this, but I didn’t touch it.” Which brings us to the central question (sorry, had to use this image to quote my favorite fictional author here):

Tasks? Attention? Judgment? Identity?

The deeper I go, the more I think it’s all four.

The Four Layers of Delegation

1. Tasks

This is the most obvious layer. We let agents take over scheduling, drafting, filing, searching, summarising. Classic productivity moves, but even here, there’s more at stake than time saved. Research on cognitive offloading (Tsai et al., 2024) shows that repeated delegation of routine tasks leads to decreased recall and weaker task engagement, the so-called “Google Maps effect” applied to work. You save time, but you lose the muscle memory that made the task yours.

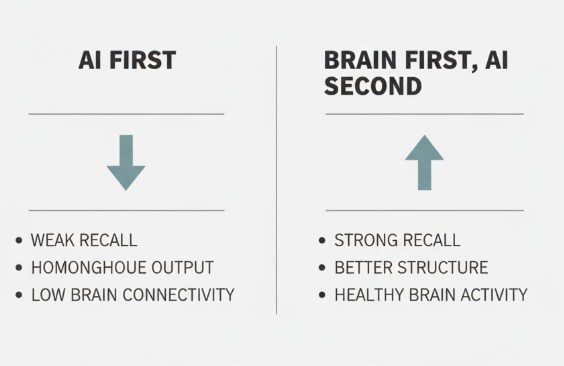

A 2025 MIT study found that students who used ChatGPT to write essays couldn’t produce a single accurate quote from their own writing afterwards. Their brain scans showed weaker connectivity between regions compared to students who thought first and used AI later. The researchers called this “cognitive debt”: you defer effort now, but pay later with weaker critical thinking and poorer recall. David Epstein, writing about the study, framed AI-first work as the opposite of what cognitive psychologists call “desirable difficulty.” He called it an “undesirable ease.” The work gets done, but nothing sticks.

What’s interesting is that students who did their own thinking first and then used ChatGPT performed well. Their essays were better structured, they remembered what they wrote, and their brain activity looked healthy. The tool wasn’t the problem, the sequence was: brain first, tool second. This distinction alone could reshape how we think about delegation at work.

2. Attention

What we automate, we stop paying attention to. When agents filter our inputs, we lose the ambient awareness that comes from skimming an inbox, scanning a feed, or casually checking in. Liu & Pelowski (2023) showed that reliance on LLMs for triaging and prioritisation can lower attentional resilience, which is our ability to maintain context over time. The small, forgettable acts of paying attention turn out to be load-bearing.

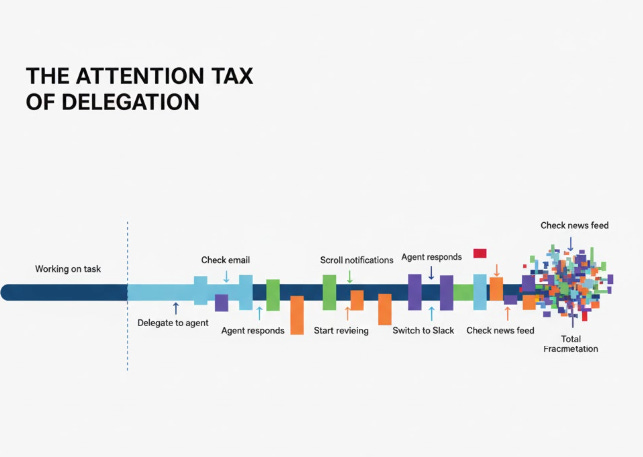

There’s also a subtler attention tax that I don’t see people talking about yet. Even when we delegate to AI, the more complex the task, the longer the agent takes to respond. And in that gap, we do what we’ve been trained to do by a decade of smartphones: we context-switch. We check another tab, glance at notifications, start a different thread. So the tool that was supposed to free our attention actually fragments it further, because we can’t sit still while it works (and the human brain takes an average of 30 minutes to refocus). Potentially, then, we’re not saving attention, we’re scattering it across more surfaces.

3. Judgment

The more we delegate prioritisation or decision-making, the more we risk eroding our intuitive judgment. A 2023 Stanford study (But et al., 2023) found that over time, users relying on AI for decision support in ambiguous tasks began deferring more quickly and questioning their own reasoning less. That means delegation doesn’t just outsource effort, it can atrophy discernment.

Seneca wrote that “no man is ever wise by chance.” Ryan Holiday, building on this in his recent book Wisdom Takes Work, argues that wisdom isn’t gifted by age or intelligence but earned through repeated effort - it’s a verb, not a noun. The same is true of judgment; you don’t develop it by reading a summary of someone else’s reasoning. You develop it by sitting with ambiguity, making calls, getting some wrong, and refining your instincts over time. If we skip that process and let the tool decide, we don’t just lose time. We lose the reps.

But here’s a question worth sitting with: are all of these layers sequential? Does delegation always start with tasks and slide toward identity, or can someone jump straight to delegating judgment without realising it? I suspect they can jump, and that’s what makes this harder to design for than it looks.

4. Identity

This is the most subtle and maybe the most profound: when your agent sends the email, files the doc, writes the first draft, whose voice is it? Work used to be a reflection of your craft, your thinking, your presence. Now, as we shift to directing rather than doing, authorship becomes distributed. When value is produced in invisible layers of automation, how do we measure contribution? And more importantly, how do we maintain a sense of ownership over work that increasingly isn’t done by us?

Each of these layers has a trade-off, and the consequences appear downstream, not immediately. This is why they’re hard to see, but also why it’s worth paying attention now.

So when we say we’re using AI to save time, we should ask: which part of ourselves are we asking it to stand in for?

The Case For Delegation (Before we blow it up)

Before going further into what delegation erodes, it’s worth being honest about what it enables, because the optimistic case is real too.

For many people, delegation doesn’t diminish them: it can free them. A designer who offloads invoice management isn’t losing part of their identity, they’re reclaiming time for the work that is their identity. A founder who automates meeting summaries isn’t diffusing their cognition, they’re concentrating it where it matters most. A neurodivergent professional who uses AI to structure their day isn’t becoming dependent, they’re gaining access to a kind of executive function support that didn’t exist before.

The risk isn’t delegation itself. It’s undiscerning delegation, handing things off without asking what you’re losing in the process. I think the people who will thrive with AI tools tend to be intentional about what they keep and what they release.

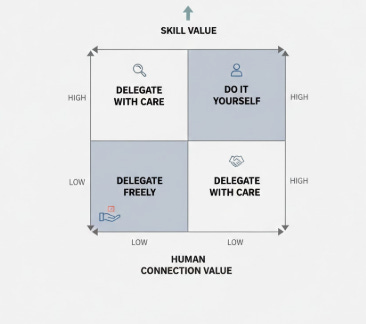

A Simple Filter: The Delegation Compass

When I started being more intentional about what I hand off to AI and what I keep, I found it helpful to run each task through a quick filter. Not a rigid system, more of a compass. Four questions:

1. Is this a skill I want to keep sharp?

If yes, do it yourself, even if AI could do it faster. Writing, strategic thinking, negotiation, creative problem-solving. These are muscles. Outsource them long enough and they atrophy. The convenience isn’t worth the cost if the skill matters to your identity or career.

2. Is this a task where my judgment is the point?

Some tasks exist because they require your specific perspective: reading the room in a client situation, deciding what to prioritise this quarter, choosing the right tone for a difficult message. If the value comes from your judgment, delegating it isn’t efficiency.

3. Would I notice if this was done poorly?

If your agent summarises a meeting badly or sends a slightly off-tone email, would you catch it? If yes, delegation works, you’re still the quality filter. If no, you’ve created a blind spot. The more invisible the delegation, the more dangerous it is.

4. Does doing this connect me to people or context I’d otherwise lose?

Sometimes the “inefficient” task, like scanning the inbox, joining the standup, writing the update yourself, is actually how you stay plugged into what’s happening around you. Automate it away and you might save thirty minutes but lose the peripheral awareness that makes you effective.

In practice, I’ve found that most people over-delegate on questions 2 and 4, and under-delegate on the genuinely mechanical work where AI shines and nothing meaningful is lost.
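For readers who think better in code, the four questions above can be sketched as a tiny Python function. This is an illustration only, not a rigid system: the names `CompassAnswers` and `delegation_compass` are hypothetical, and so is the mapping from answers to suggestions. In particular, turning a "no" on question 3 into "delegate with a check" is my assumption about how to handle a blind spot.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CompassAnswers:
    """Yes/no answers to the four compass questions for one task."""
    skill_to_keep_sharp: bool    # Q1: is this a skill I want to keep sharp?
    judgment_is_the_point: bool  # Q2: is my judgment the point of the task?
    would_notice_if_poor: bool   # Q3: would I notice if it was done poorly?
    keeps_me_connected: bool     # Q4: does it connect me to people or context?


def delegation_compass(answers: CompassAnswers) -> tuple[str, list[str]]:
    """Return a (suggestion, reasons) pair. The mapping is an assumption."""
    reasons = []
    if answers.skill_to_keep_sharp:
        reasons.append("skill I want to keep sharp")
    if answers.judgment_is_the_point:
        reasons.append("my judgment is the point")
    if answers.keeps_me_connected:
        reasons.append("keeps me connected to people or context")

    # Any "yes" on Q1, Q2 or Q4 is treated as a reason to keep the task.
    if reasons:
        return "keep", reasons
    # Nothing argues for keeping it, but a "no" on Q3 signals a blind spot.
    if not answers.would_notice_if_poor:
        return "delegate with a check", ["blind spot: I would not notice poor output"]
    return "delegate", ["mechanical work, and I remain the quality filter"]


# Hypothetical examples: filing invoices vs. writing a difficult client message.
invoice_filing = CompassAnswers(False, False, True, False)
client_message = CompassAnswers(True, True, True, True)

print(delegation_compass(invoice_filing))
print(delegation_compass(client_message))
```

The point of returning the reasons alongside the suggestion is that the compass is meant to prompt a moment of thought, not to replace it.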

From Delegation to Dependency

We start by automating friction, but friction often served a function.

Just like GPS replaced our need to build spatial maps in our head, and in turn weakened our ability to navigate unaided, software agents may dull our sense of sequence, priority, and even memory. We begin to use the tool not just to do more, but to remember how to do anything at all.

A study published in The BMJ in December 2024 examined death certificates from nearly 9 million people across 443 occupations and found that taxi drivers and ambulance drivers, who do constant real-time wayfinding, had the lowest risk of dying from Alzheimer’s. Bus drivers and pilots, who follow predetermined routes, showed no such benefit. The cognitive effort of navigating in real time, the daily difficulty of figuring out where to go, appeared to protect the brain. When David Epstein asked one of the study’s co-authors whether GPS might erode this benefit for future drivers, the response was telling: “The now ubiquitous use of GPS could, in theory, mitigate the spatial memory based benefits that prior generations of taxi or ambulance drivers may have developed.” The convenience removes the challenge, and with it, the benefit.

I notice this in small ways. I catch myself reaching for the agent before I’ve even tried to think through a problem - not because I can’t, but because the path of least resistance has shifted. Dependency grows not from a single choice, but from repeated relief.

We also become dependent not just functionally, but psychologically. We get used to instant access, always-on assistants, smart recommendations. I see it happening when my son scrolls endlessly through shows, not because he’s engaged, but because he’s come to expect that something better is always one swipe away. In us adults, it takes other forms: jumping between tabs, cycling through to-do apps, refreshing notifications. These aren’t failures of discipline. They’re symptoms of abundance. Our brains evolved for scarcity, and the sudden overload of delegation, information, and options doesn’t create clarity. It creates craving.

The Multiplication of Selves

Once delegation is normalised, and dependency starts to take hold, something new kicks in: we aren’t just outsourcing parts of our cognition, we are multiplying them.

One you drafts strategy. Another executes ops. One handles outreach, another files reports. You’re still in the loop, but your cognitive bandwidth is split across different contexts, each running in parallel.

In Fight Club, Tyler Durden and the narrator are the same person, a split mind acting across incompatible realities, fractured by contradictory roles and desires. And that was 1999, a metaphor for industrial-era alienation.

What happens when work actually mirrors that? Because here’s where I think we’re heading: as AI drives productivity up, expectations rise in parallel. People are asked to manage more workstreams, shift faster between strategic and tactical tasks, switch hats more often. Not because it’s healthy, but because now it’s possible.

And this creates a vicious cycle that I’m already starting to feel.

The faster you work, the more you’re expected to produce in the same amount of time. So you delegate more to keep up. But now you’re delegating things that didn’t need to be delegated, things you would have been better off doing yourself, because the pressure to output has outpaced your ability to be thoughtful about what you hand off. The quality of your thinking degrades not because you’re lazy, but because you’re running to keep up with a pace that your own tools set. You start producing what I’d call slop: work that technically got done but that nobody, including you, actually thought through. And the worst part is, the faster cycle makes it harder to notice, because you’re already on to the next thing.

When your workday is a rapid shuffle between six mental states (editor, analyst, manager, recruiter, planner, fixer), you start to lose track of which version of you is the real one. Or if they all are. Or none.

This fragmentation intersects with something we don’t talk about enough in productivity conversations: loneliness.

We are already living through a social crisis, exacerbated by digital platforms, remote work, and growing individualism. If your systems know you better than your colleagues do, and if your assistant is your primary collaborator, what happens to the communal fabric of work?

There’s a question here that genuinely unsettles me: when you get used to asking AI for everything, and expecting immediate, exhaustive answers, can you still switch to human communication with its pauses, its ambiguity, its need for empathy and patience?

Because human interaction is slow - it’s inefficient, it requires tolerating not-knowing, sitting with discomfort, building understanding over time. If our baseline shifts to the speed and precision of AI responses, the friction of human conversation might start to feel like a bug rather than a feature.

Who Controls the Extended Mind?

In 1998, philosophers Andy Clark and David Chalmers proposed a radical idea in their essay The Extended Mind. The thesis was simple but profound: if we regularly use external objects, like notebooks or devices, to store and process information, those objects should be considered part of our cognitive system. Thinking doesn’t stop at the skin. It stretches into the world.

When that world includes always-on agents, auto-completing systems, and task-oriented memories, the boundary between brain and tool gets even blurrier. If your assistant finishes your sentence, prioritises your day, and summarises your calls, is that still you thinking? Or are you co-thinking with a machine?

And if part of your cognition happens in a tool you don’t fully own, like an enterprise LLM or a rented platform, who owns the thinking?

We are entering a phase where parts of our minds are embedded in rented software, shaped by algorithms, monitored by employers, and retrained on our patterns of thought. A note you wrote in Notion might be semi-private. An email draft written with AI might live in training logs. A decision made through a delegated workflow might leave behind a traceable record, even if you never typed it yourself.

Designing the Split

We are living in the equivalent of what 2007 was for social media. The beginning, but also, potentially, the beginning of the end of something we haven’t yet learned to name. The end of undivided attention, perhaps. Or the end of unsupervised cognition.

Can we stop, think, and design things right this time around? Or, much like all of human history, will we need to hit a wall before we start to scale back?

I don’t think those are the only two options, but I do think the window for the first one is smaller than we’d like to believe. The tools are moving fast. The habits are forming faster. And the questions this piece has tried to raise, about what we delegate, what we lose, and who owns the parts of us that live inside machines, are not ones we can afford to answer by default.

So here’s what I’d leave you with. Not a prediction, but an invitation:

Pay attention to what you’re handing off. Not just the task, but the thinking behind it. Notice when delegation stops feeling like a choice and starts feeling like a reflex. That’s the edge worth watching.

Until next week

Matteo
